Multimodal Affective Computing Market Report 2026

Global Outlook – By Component (Hardware, Software, Services), By Modality Type (Facial Expression Recognition, Speech And Voice Analysis, Physiological Signals, Gesture And Body Language, Text And Sentiment Analysis, Multimodal Fusion Systems), By Deployment Mode (On-Premise, Cloud-Based), By Application (Emotion Recognition And Analytics, Human-Computer Interaction, Mental Health Monitoring And Therapy, Customer Experience Management, Training And Simulation, Adaptive Learning Systems), By End User (Healthcare And Life Sciences, Automotive And Transportation, Consumer Electronics And Smart Devices, Education And E-Learning, Retail And Customer Experience, Research And Academic Institutions, Government And Public Sector) – Market Size, Trends, Strategies, and Forecast to 2035

Multimodal Affective Computing Market Overview

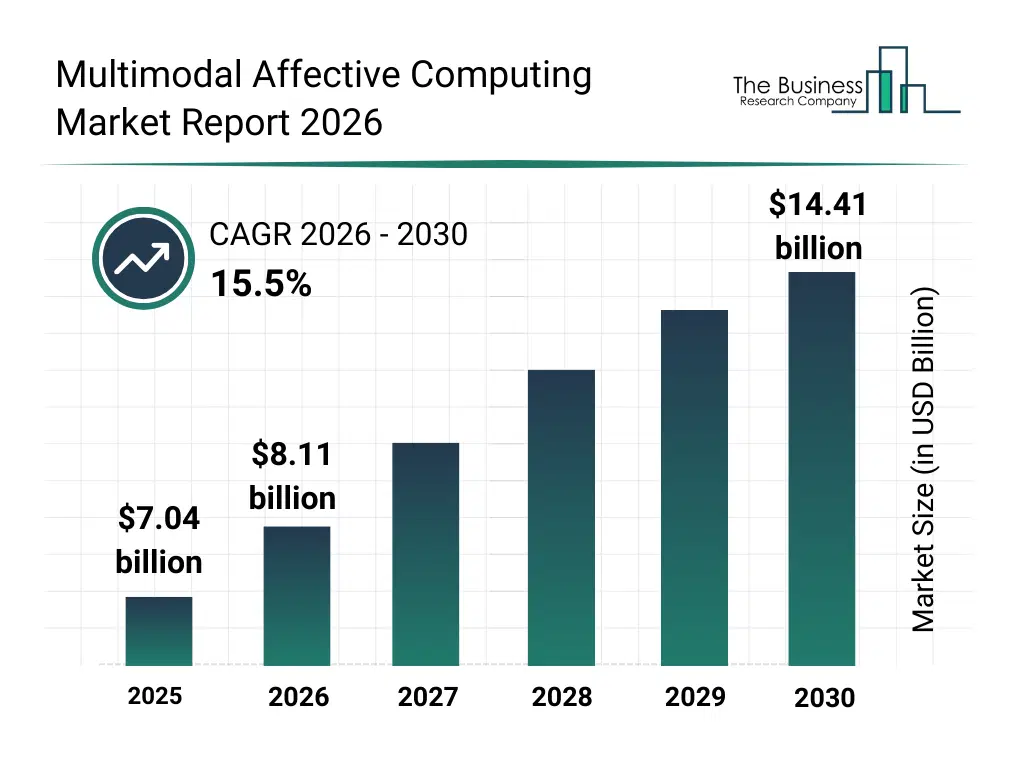

• The multimodal affective computing market reached $7.04 billion in 2025
• It is expected to grow to $14.41 billion in 2030 at a compound annual growth rate (CAGR) of 15.5%
• Growth Driver: Growing Proliferation Of Internet Of Things (IoT) Devices Driving Growth In The Market Due To Increasing Demand For Accurate And Reliable Device Evaluation
• Market Trend: Advancements In Multimodal AI Platforms For Enhanced Emotion Recognition
• North America was the largest region in 2025, and Asia-Pacific is the fastest-growing region

What Is Covered Under Multimodal Affective Computing Market?

Multimodal affective computing refers to technology that analyzes and interprets human emotions using multiple data sources, or modalities, such as facial expressions, voice tone, physiological signals, and text. Its primary purpose is to enable systems to recognize, respond to, and adapt to human emotional states, enhancing human-computer interaction, personalized experiences, and decision-making in various applications. The main components of the multimodal affective computing market are hardware, software, and services. Hardware refers to devices such as cameras, microphones, wearable sensors, and edge systems used to capture and process emotional and behavioral signals. The modality types include facial expression recognition, speech and voice analysis, physiological signals, gesture and body language, text and sentiment analysis, and multimodal fusion systems. These are deployed through on-premise and cloud-based modes. The applications include emotion recognition and analytics, human-computer interaction, mental health monitoring and therapy, customer experience management, training and simulation, and adaptive learning systems. They cater to end users such as healthcare and life sciences, automotive and transportation, consumer electronics and smart devices, education and e-learning, retail and customer experience, research and academic institutions, and government and public sector.
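As a rough illustration of what combining modalities can mean in practice, the sketch below uses late fusion: each modality produces its own emotion probabilities, and a weighted average merges them. The emotion labels, scores, weights, and the `late_fusion` helper are all hypothetical and chosen only for illustration; they do not describe any vendor's product or method covered in this report.

```python
from typing import Dict

# Hypothetical emotion labels used only for this illustration.
EMOTIONS = ("neutral", "happy", "stressed")


def late_fusion(
    scores_by_modality: Dict[str, Dict[str, float]],
    weights: Dict[str, float],
) -> Dict[str, float]:
    """Merge per-modality emotion probabilities with a weighted average.

    Weights are renormalized over the modalities actually present, so a
    missing sensor (for example, no camera feed) does not skew the result.
    """
    total_weight = sum(weights[m] for m in scores_by_modality)
    if total_weight <= 0:
        raise ValueError("at least one modality needs a positive weight")

    fused = {emotion: 0.0 for emotion in EMOTIONS}
    for modality, scores in scores_by_modality.items():
        share = weights[modality] / total_weight
        for emotion in EMOTIONS:
            fused[emotion] += share * scores[emotion]
    return fused


# Made-up classifier outputs for one moment in time (assumed values).
scores = {
    "face": {"neutral": 0.6, "happy": 0.3, "stressed": 0.1},
    "voice": {"neutral": 0.2, "happy": 0.1, "stressed": 0.7},
    "text": {"neutral": 0.3, "happy": 0.2, "stressed": 0.5},
}
# Assumed trust placed in each modality.
weights = {"face": 0.5, "voice": 0.3, "text": 0.2}

fused = late_fusion(scores, weights)
top_emotion = max(fused, key=fused.get)
print(fused, top_emotion)
```

Late fusion is only one option. Systems may instead combine raw features before classification (early fusion) or learn the combination jointly, and the right choice depends on the sensors and data available.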

What Is The Multimodal Affective Computing Market Size and Share 2026?

The multimodal affective computing market has grown rapidly in recent years. It will grow from $7.04 billion in 2025 to $8.11 billion in 2026 at a compound annual growth rate (CAGR) of 15.2%. The growth in the historic period can be attributed to increasing demand for enhanced human-computer interaction, rising adoption of facial and speech recognition technologies, growing use of AI in customer experience management, expansion of wearable biometric devices, and increasing investments in mental health technology.

What Is The Multimodal Affective Computing Market Growth Forecast?

The multimodal affective computing market is expected to see rapid growth in the next few years. It will grow to $14.41 billion in 2030 at a compound annual growth rate (CAGR) of 15.5%. The growth in the forecast period can be attributed to growing deployment of cloud-based emotion analytics platforms, rising demand for adaptive learning systems, increasing integration of multimodal systems in automotive safety, expansion of edge computing for real-time affect analysis, and rising focus on emotion-aware virtual assistants. Major trends in the forecast period include increasing adoption of multimodal fusion systems, rising demand for real-time emotion recognition services, growing integration of wearable emotion monitoring devices, expansion of mental health monitoring applications, and rising focus on personalized human-computer interaction.

Global Multimodal Affective Computing Market Segmentation

1) By Component: Hardware, Software, Services
2) By Modality Type: Facial Expression Recognition, Speech And Voice Analysis, Physiological Signals, Gesture And Body Language, Text And Sentiment Analysis, Multimodal Fusion Systems
3) By Deployment Mode: On-Premise, Cloud-Based
4) By Application: Emotion Recognition And Analytics, Human-Computer Interaction, Mental Health Monitoring And Therapy, Customer Experience Management, Training And Simulation, Adaptive Learning Systems
5) By End User: Healthcare And Life Sciences, Automotive And Transportation, Consumer Electronics And Smart Devices, Education And E-Learning, Retail And Customer Experience, Research And Academic Institutions, Government And Public Sector

Subsegments:
1) Software: Emotion Recognition Platforms, Behavioral Analysis Software, Facial Expression Analysis Tools, Speech And Voice Analysis Software, Multimodal Data Fusion Platforms
2) Hardware: Cameras And Imaging Sensors, Microphones And Audio Sensors, Wearable Biometric Sensors, Processing And Computing Units, Edge Computing Devices
3) Services: System Integration Services, Data Annotation And Labeling Services, Model Training And Optimization Services, Maintenance And Technical Support Services, Consulting And Custom Development Services

What Is The Driver Of The Multimodal Affective Computing Market?

The growing proliferation of Internet of Things (IoT) devices is expected to propel the growth of the multimodal affective computing market going forward. IoT devices are physical objects with sensors and internet connectivity that collect and exchange data for automation and monitoring. The increase in IoT devices is driven by growing demand for smart automation, as businesses and consumers adopt connected devices to monitor, control, and optimize processes, improving efficiency, convenience, and real-time decision-making. Multimodal affective computing supports IoT device adoption by enabling devices to recognize, interpret, and respond to human emotions through multiple input channels, such as voice, facial expressions, and gestures. It enhances user interaction, personalization, and engagement, driving more intuitive and widely accepted IoT solutions. For instance, in October 2025, according to IoT Analytics, a Germany-based provider of market insights, the number of connected IoT devices grew 14% in 2025 and is projected to reach 39 billion by 2030. Therefore, the growing proliferation of IoT devices is driving the growth of the multimodal affective computing industry.

Key Players In The Global Multimodal Affective Computing Market

Major companies operating in the multimodal affective computing market are Apple Inc., Google LLC, Microsoft Corporation, Samsung Electronics Co. Ltd., Sony Group Corporation, IBM Corporation, NVIDIA Corporation, Panasonic Corporation, Intel Corporation, SoftBank Group Corp., Qualcomm Technologies Inc., NEC Corporation, Xiao-I Robot Technology Co. Ltd., Uniphore Software Systems Pvt. Ltd., nViso SA, Smart Eye AB, Noldus Information Technology BV, Visage Technologies AB, Hume AI Inc., and Opsis Pte Ltd.

Global Multimodal Affective Computing Market Trends and Insights

Major companies operating in the multimodal affective computing market are focusing on developing innovative solutions, such as multimodal AI platforms, to enhance emotion recognition accuracy by integrating facial, voice, and physiological data for more human-centric applications. A multimodal AI platform is an advanced system that combines and analyzes multiple data types, such as text, speech, facial expressions, and sensor signals, to better understand and interpret human emotions and behavior. For instance, in May 2025, Neurologyca, a Spain-based technology company, launched Kopernica to enable emotionally aware AI systems. It serves as an emotional operating system for AI, fusing multimodal data such as facial expressions (790+ body points), voice tone or rhythm, and behavior to detect up to 90 nuanced states such as stress, motivation, cognitive load, anxiety, or fatigue. According to the company, its advantages include neuroscience-trained precision that outperforms competitors, on-device privacy processing without data storage, and seamless integration with LLMs for empathetic responses.

What Are Latest Mergers And Acquisitions In The Multimodal Affective Computing Market?

In July 2025, Hume AI Inc., a US-based research lab and technology company, partnered with Groq Inc. to enable real-time, low-latency emotional AI. With this partnership, the companies aim to deliver ultra-fast, emotionally intelligent speech-to-speech AI with sub-300 ms latency, natural prosody, and real-time empathy for scalable enterprise voice agents. Groq Inc. is a US-based artificial intelligence (AI) company that offers a specialized hardware and software platform focused on ultra-fast AI inference.

Regional Insights

North America was the largest region in the multimodal affective computing market in 2025. Asia-Pacific is expected to be the fastest-growing region in the forecast period. The regions covered in this market report are Asia-Pacific, South East Asia, Western Europe, Eastern Europe, North America, South America, Middle East, and Africa. The countries covered in this market report are Australia, Brazil, China, France, Germany, India, Indonesia, Japan, Taiwan, Russia, South Korea, UK, USA, Canada, Italy, and Spain.

What Defines the Multimodal Affective Computing Market?

The multimodal affective computing market consists of revenues earned by entities that provide services such as emotion recognition and analysis services, real-time affect monitoring services, and personalized user interaction services. The market value includes the value of related goods sold by the service provider or included within the service offering. The multimodal affective computing market also includes sales of AI-powered facial expression analysis tools, voice and speech emotion recognition systems, and wearable emotion and stress monitoring devices. Values in this market are ‘factory gate’ values; that is, the value of goods sold by the manufacturers or creators of the goods, whether to other entities (including downstream manufacturers, wholesalers, distributors, and retailers) or directly to end customers. The value of goods in this market includes related services sold by the creators of the goods.

How is Market Value Defined and Measured?

The market value is defined as the revenues that enterprises gain from the sale of goods and/or services within the specified market and geography through sales, grants, or donations, expressed in currency terms (in USD unless otherwise specified). The revenues for a specified geography are consumption values; that is, revenues generated by organizations in the specified geography within the market, irrespective of where the goods or services are produced. Market value does not include revenues from resales, either further along the supply chain or as part of other products.

What Key Data and Analysis Are Included in the Multimodal Affective Computing Market Report 2026?

The multimodal affective computing market research report is one of a series of new reports from The Business Research Company. It provides multimodal affective computing market statistics, including global market size, regional shares, competitors and their market shares, detailed market segments, and market trends and opportunities. The report also gives an in-depth analysis of the current and future scenario of the industry.

Multimodal Affective Computing Market Report Forecast Analysis

| Report Attribute | Details |
|---|---|
| Market Size Value In 2026 | $8.11 billion |
| Revenue Forecast In 2030 | $14.41 billion |
| Growth Rate | CAGR of 15.5% from 2026 to 2030 |
| Base Year For Estimation | 2025 |
| Actual Estimates/Historical Data | 2020-2025 |
| Forecast Period | 2026 - 2030 - 2035 |
| Market Representation | Revenue in USD Billion and CAGR from 2026 to 2035 |
| Segments Covered | Component, Modality Type, Deployment Mode, Application, End User |
| Regional Scope | Asia-Pacific, Western Europe, Eastern Europe, North America, South America, Middle East, Africa |
| Country Scope | The countries covered in the report are Australia, Brazil, China, France, Germany, India, ... |
| Key Companies Profiled | Apple Inc., Google LLC, Microsoft Corporation, Samsung Electronics Co. Ltd., Sony Group Corporation, IBM Corporation, NVIDIA Corporation, Panasonic Corporation, Intel Corporation, SoftBank Group Corp., Qualcomm Technologies Inc., NEC Corporation, Xiao-I Robot Technology Co. Ltd., Uniphore Software Systems Pvt. Ltd., nViso SA, Smart Eye AB, Noldus Information Technology BV, Visage Technologies AB, Hume AI Inc., and Opsis Pte Ltd. |
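The growth rates quoted in this report can be cross-checked against the market size figures given in the report text ($7.04 billion in 2025, $8.11 billion in 2026, and $14.41 billion in 2030). The sketch below applies the standard CAGR formula, (end / start)^(1 / years) - 1. The function and variable names are illustrative, and the assumption that the 15.5% rate spans 2026 to 2030 is an inference from those figures rather than something the report states outright.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.155 = 15.5%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market sizes quoted in the report text, in USD billion.
SIZE_2025 = 7.04
SIZE_2026 = 8.11
SIZE_2030 = 14.41

# One-year growth from the 2025 base year to 2026.
growth_2025_2026 = cagr(SIZE_2025, SIZE_2026, years=1)

# Growth over the four years from 2026 to 2030 (assumed span).
growth_2026_2030 = cagr(SIZE_2026, SIZE_2030, years=4)

print(f"2025 to 2026: {growth_2025_2026:.1%}")
print(f"2026 to 2030: {growth_2026_2030:.1%}")
```

Rounded to one decimal place, the two results match the 15.2% and 15.5% rates quoted in the report.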